December 28th 2020

As some of you may already know… I began an Artificial Intelligence start-up (or software company) in 2016, with a specialization in psychological AI and chat-bots.

And for me, Artificial Intelligence is a passion and a hobby. It’s not about the money, I enjoy doing it, and it could change the world. Nevertheless, Steve Worswick (https://twitter.com/KukiChatbotDev) is the current world leader in chat-bot technology, and you can talk to his chat-bot Mitsuku (also known as Kuki) at https://www.pandorabots.com/mitsuku/. It’s pretty amazing software, with IBM Watson and Apple’s Siri probably ranking 2 and 3 on the free market, respectively.

Nevertheless, Steve’s bot is programmed with no psychological development whatsoever… which is something that I’m taking a step further with ArtificialIntelligenceProject.com, but it’s taking me longer than I had anticipated to develop (today I’m in year 4, whereas it took Steve 9-10 years to finish Mitsuku). Regardless, I hope to surpass Steve’s work in 2023-2024. But, long story long… as I’ve been creating this chat-bot over the last 4 years, it has become very clear to me that both sound recognition and visual recognition will eventually need to be implemented into the software in order to create some sort of robot. So to be specific, I’m focused on the brain with ArtificialIntelligenceProject.com, but what’s a brain without eyes?

So… I began AiRobotVision.com in 2019, to complement my chat-bot.

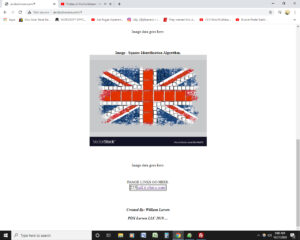

And in this image… we have a picture of a British flag, and the Python code is breaking that image down into separate colors and then defining squares inside of each color, using an algorithm that I’ve been working on. And this is where the project currently stands.

But to be more specific… rather boldly, I started researching Python code in 2019 that can break down images by pixels… and then I started creating my own visual recognition software, with the hopes of adding visual cues to my chat-bot in future versions. Ideally, I’d like to add this to my chat-bot in Version 3, although I’m still working on Version 2 of my first “chat-bot,” which will not have a visual component until later on. Nevertheless, you can literally watch as I build that software on AiRobotVision.com, where I’m basically building the robot’s visual acuity in the public eye.

But how does it work? And what is the future of this technology?

Well, I’m using Python code to break the images uploaded to AiRobotVision.com down by their pixels, using HTML color codes… and then I’m defining shapes through algorithms that work off of that color data. I’ve written these algorithms myself to define squares (at the moment), i.e. the example image above, and then I plan to use that recognition of “shapes” as inputs, to go along with the chat-bot’s text inputs, in future versions of my chat-bot. So I’m basically building a robot in my bedroom like Anakin Skywalker, as a hobby.

In real life.

So basically, when you upload an image on AiRobotVision.com, it breaks down all the individual pixels’ color codes into matrices and then defines shapes using color. That’s where the project stands today, anyway.
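As a rough illustration of that idea (this is not the actual code running on AiRobotVision.com), here is a minimal Python sketch. It assumes the image has already been read into rows of `(r, g, b)` tuples, turns each pixel into an HTML color code, and then finds the largest single-color square for each color. The function names `to_hex_matrix` and `largest_square_per_color` are made up for this example, and the square search is the standard "maximal square" dynamic-programming trick, swapped in as a stand-in for the site's own algorithm.

```python
def to_hex_matrix(pixels):
    """Convert rows of (r, g, b) tuples into rows of HTML color codes."""
    return [
        ["#{:02X}{:02X}{:02X}".format(r, g, b) for (r, g, b) in row]
        for row in pixels
    ]


def largest_square_per_color(hex_matrix):
    """Find, for each color, the largest axis-aligned square of only that color.

    Returns a dict mapping color code -> (top_row, left_col, side_length).
    Hypothetical helper: a stand-in for the real shape-finding algorithm.
    """
    rows = len(hex_matrix)
    cols = len(hex_matrix[0]) if rows else 0
    # size[y][x] = side of the largest single-color square whose
    # bottom-right corner sits at pixel (y, x).
    size = [[1] * cols for _ in range(rows)]
    best = {}
    for y in range(rows):
        for x in range(cols):
            color = hex_matrix[y][x]
            same_neighbors = (
                y > 0 and x > 0
                and hex_matrix[y - 1][x] == color
                and hex_matrix[y][x - 1] == color
                and hex_matrix[y - 1][x - 1] == color
            )
            if same_neighbors:
                # Grow the square from the three neighboring squares.
                size[y][x] = 1 + min(
                    size[y - 1][x], size[y][x - 1], size[y - 1][x - 1]
                )
            side = size[y][x]
            if color not in best or side > best[color][2]:
                best[color] = (y - side + 1, x - side + 1, side)
    return best


# Tiny made-up 4x4 "image": a 2x2 red block in the top-left corner.
RED, WHITE, NAVY = (255, 0, 0), (255, 255, 255), (0, 0, 128)
pixels = [
    [RED, RED, WHITE, NAVY],
    [RED, RED, WHITE, NAVY],
    [WHITE, WHITE, WHITE, NAVY],
    [NAVY, NAVY, NAVY, NAVY],
]
hex_matrix = to_hex_matrix(pixels)
squares = largest_square_per_color(hex_matrix)
print(squares["#FF0000"])  # the red block: (0, 0, 2)
```

In a real upload, the pixel rows would come from an image library instead of being typed in by hand, and a flag-sized image would produce many more squares per color than this toy example.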

Well, in the future… my hope is to add TEXT inputs or voice recognition… so that the chat-bot can recognize inputs that are both visual and textual, thus giving the robot more data about its environment.

But the most important thing to code first is the ability for the robot to define the dimensions of a room. So once I finish coding the visual software to define shapes and input that data of “shapes” into the chat-bot, simultaneously with text… I then plan on coding it to take 2 pictures at a time (like 2 eyes) and to then judge DISTANCE AND SPACE by comparing the data of the 2 images.

So this is how it’s going to work… AiRobotVision.com will allow 2 separate cameras, positioned at fixed points roughly 2 inches apart like human eyes, to take 2 pictures at a time. Then, once the Python code (which I’m coding now) has broken both images down into shapes, the software will take the shapes from both images, compare their distances and then judge the dimensions of the room.
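For anyone curious about the math behind the "2 eyes" idea, here is a minimal sketch of the standard stereo triangulation formula, distance = focal length × camera spacing ÷ disparity. This is textbook geometry rather than finished AiRobotVision.com code, and it assumes two identical cameras pointing straight ahead in parallel. The function name `depth_from_disparity` and the 800-pixel focal length are assumptions made up for the example; only the roughly 2 inch (0.0508 m) spacing comes from the plan above.

```python
def depth_from_disparity(focal_length_px, baseline_m, x_left_px, x_right_px):
    """Estimate distance (in meters) to a shape seen by both cameras.

    focal_length_px: camera focal length in pixels (assumed known).
    baseline_m:      spacing between the two cameras in meters.
    x_left_px:       horizontal position of the shape in the left image.
    x_right_px:      horizontal position of the same shape in the right image.
    """
    # Disparity is how far the shape shifts between the two pictures.
    disparity = x_left_px - x_right_px
    if disparity <= 0:
        raise ValueError("shape must appear further right in the left image")
    return focal_length_px * baseline_m / disparity


# Made-up numbers: cameras 2 inches (0.0508 m) apart, and the center of
# one square at x=420 in the left picture and x=400 in the right picture.
distance_m = depth_from_disparity(800, 0.0508, 420, 400)
print(round(distance_m, 3))  # about 2.032 meters
```

The closer a shape is, the more it shifts between the two pictures, so a bigger disparity means a smaller distance. Doing this for the squares on each wall is one way the software could start to judge the dimensions of a room.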

And I plan on making that software free, one day.

You’re welcome.

Nevertheless, how far along are Apple, FB and Google in terms of developing this type of software? Well, let’s just say that I can talk to my Instagram AI and I can already tell that they have that technology, but they’re not releasing it.

-William Larsen, CiviliansNews.com founder and part-time superhero.